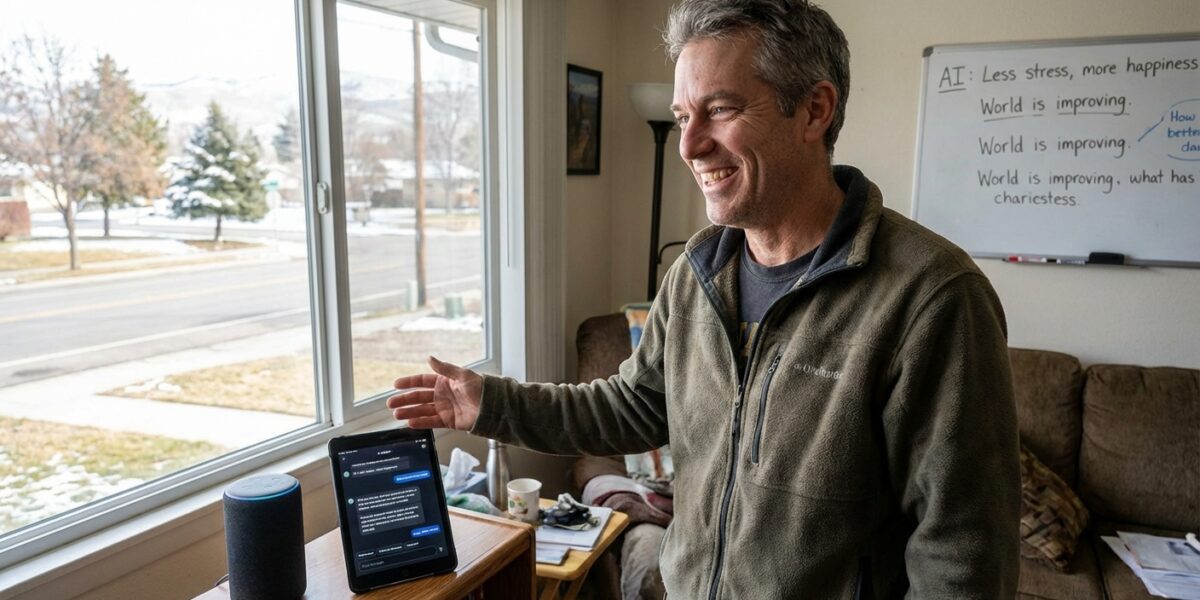

BOISE, ID — Gary Tillman, 52, a retired insurance adjuster and self-described “regular guy who just wants to understand the world,” made the decision eight months ago to stop reading the news and start asking an AI chatbot all his questions about current events, a choice he describes as “the best thing I ever did” and that media literacy experts describe as “a genuinely mixed bag.”

Tillman’s quality of life has improved measurably since the switch. He sleeps eight hours a night. His blood pressure is down. He no longer feels what he used to call “the dread” — the specific sinking sensation he’d experience upon opening a news app and finding seventeen catastrophic things had happened while he was asleep. He reads more. He calls his daughter. He has taken up woodworking.

He is also, according to three separate fact-checkers, incorrect about the sequence of events in a significant international conflict, operating under a misunderstanding about vaccine policy that his doctor describes as “not actively dangerous but not accurate,” and laboring under the impression that a major piece of federal legislation passed last September that did not, in fact, pass last September.

“I feel incredibly well-informed,” Tillman told Supposedly News, speaking from a workshop where he was building a very good-looking birdhouse. “I just ask it things and it tells me. It’s like having a smart friend who always has time to explain.”

The Smart Friend’s Limitations

The AI assistant Tillman uses — he declines to name which one, calling them all “basically the same” in a way that will annoy people who have opinions about AI assistants — has a training data cutoff several months before the current date, a limitation that Tillman says “doesn’t matter because most news is just the same stuff happening over and over anyway.”

This is both a philosophically defensible position and completely wrong about specific important things.

“His understanding of the general shape of major geopolitical events is actually pretty solid,” said Dr. Wendy Park, a media literacy researcher at the University of Michigan who agreed to review Tillman’s news comprehension in exchange for a birdhouse. “He grasps trends, contexts, and historical frameworks reasonably well. But there are these pockets — specific, recent, consequential events — where the chatbot has either given him outdated information, confidently incorrect information, or a synthesis that sounds correct but contains a subtle factual substitution that changes the meaning in a significant way.”

“The birdhouse is excellent, by the way,” Dr. Park added.

The Three Things

Supposedly News, with Dr. Park’s assistance, identified the three specific areas where Tillman’s AI-derived understanding diverges meaningfully from documented reality. In the interest of journalistic responsibility, we will describe them in general terms:

First: A timeline inversion involving a significant event, which has led Tillman to mistake the effect for the cause and affects how he assigns responsibility for the outcome.

Second: A policy status error that has led Tillman to tell at least four people at his woodworking club something about vaccine guidelines that is approximately 18 months out of date.

Third, and most delicately: A conflation of two different politicians with similar names that has caused Tillman to hold a relatively positive view of a legislator whose actual record, were he to read it, would likely cause him to feel quite differently.

When informed of these three errors, Tillman was quiet for a moment, then asked the AI to explain them.

The AI provided a thorough, confident, well-organized explanation that contained one additional error.

“Hm,” said Tillman.

The Broader Picture

Tillman is not unusual. Surveys suggest that a growing percentage of Americans use AI assistants as a primary or significant news source, drawn by the same qualities that drew Tillman: accessibility, calm presentation, and the absence of the sense of impending catastrophe that traditional news media has, through years of diligent effort, successfully embedded in most of its audience.

“The tragedy,” Dr. Park said, “is that Gary is in many ways a better, calmer, more considered thinker than he was when he was doom-scrolling at midnight. He just happens to be calmly and considerately wrong about three things.”

Tillman, for his part, has taken the revelation in stride. He plans to diversify his news sources while keeping the AI chatbot for “background context” and woodworking tips.

“It gave me an excellent design for a dovetail joint,” he said. “That I can verify in real time.”

The birdhouse, confirmed Dr. Park, is structurally sound.

Supposedly News uses multiple sources, a fact-checking process, and a staff that is experiencing the existential implications of reporting this particular story in real time.